2017/01/15

The Stratosphere Phenomenon

The Stratosphere Phenomenon was the semester report I turned in for Professor Charles Wharton’s Media Law, New Technology, and Constitutional Rights. It was completed under the stress of finals, long before I had a proper understanding of the U.S. legal system; but I realized it would eventually be lost in the digital age, and publishing it would at least let some random strangers get some use out of it. Please feel free to share your thoughts and criticism on this amateur work.

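“The Stratosphere Phenomenon” (同溫層現象) is the final report I wrote for Professor Charles Wharton’s “Media Law, New Technology, and Constitutional Rights” in the 105-1 semester. While organizing my graduate school application materials recently, I realized that these immature writings would eventually gather dust with the passing years; putting them online might let them be of some use instead. Comments from passing readers are very welcome. (2018/10)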

Preface

The Internet has never been more dominant in real-world life. Mobile devices connect us with the rest of the world on the fly, while cloud computing and real-time synchronization break the barrier between physical storage and data centers with nearly infinite capacity. Rather than just with other newspapers, traditional agencies now compete directly with artists, independent journalists, YouTube broadcasters, friends and family, and wherever those cat photos came from: all have a presence on social media. Information is produced too fast for us to consume, hence the invention of various algorithms to show us the most relevant pictures and posts.

“Stratosphere Phenomenon” (同溫層現象) is how Taiwan’s netizens describe content filtering: at the same altitude, with little convection, we can only seek warmth from those at a familiar temperature, which effectively limits our field of view. Fake news reports spread virally across the Internet, out of reach of their corrections. In this essay, we’ll explore why and how problematic comments are censored, the status quo and related controversies, and how an appealing model of Internet regulation could be imposed with freedom of speech in mind.

The Dark Side of Social Networks

In the early days of the Internet, information was delivered in a decentralized manner. News propagated through mailing lists and news forums, while early “search engines” were nothing more than categorized directories of available websites. Since the flow of information was relatively small, items were presented in their original form, sorted either chronologically or alphabetically. Chaotic as it seems, spam and abusive content were rare, and moderators could easily take problematic content down from the server cache.

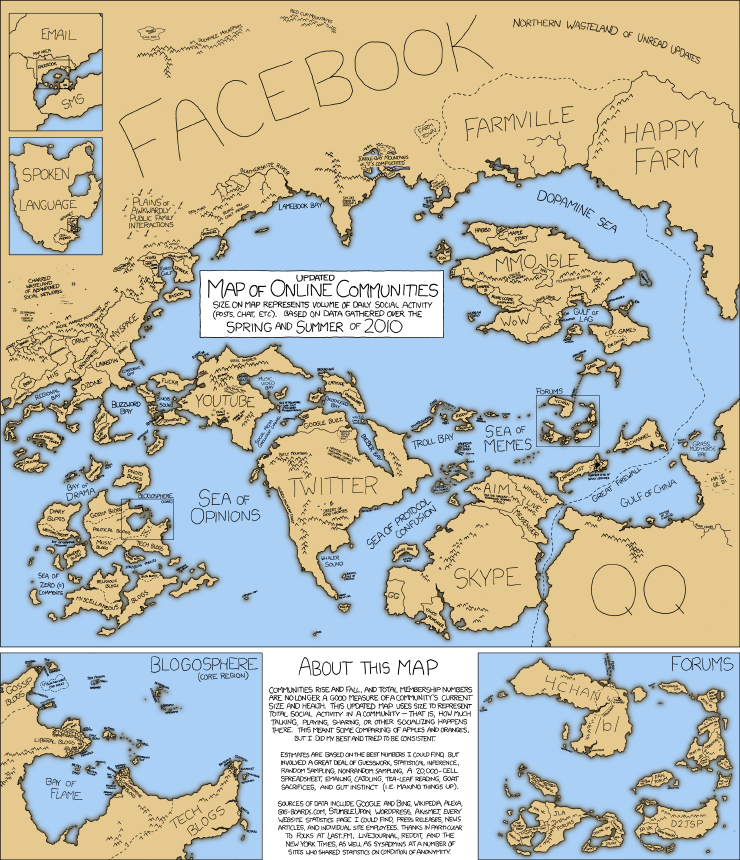

Years have since passed, and the Internet today has grown beyond the control of any single unified community. The concept of the personalized web (a.k.a. Web 2.0) influenced the creation of social media websites, which iterated quickly and became the dominant communication service on the Internet. As of September 2016, there were 1.18 billion daily active users on Facebook [1], while daily tweets on Twitter had already grown past 50 million in 2010 [2]. To keep pace with the ever-increasing volume of speech, social media websites present users with the information they care about most: what individuals see on their Social Network Service (SNS) is determined by suggestion algorithms that are opaque to the general public.

Harmless as it seems, feeding users favorable content has had a serious impact on public issues. Heavily biased news tends to beat neutral news in popularity, so it is ranked with higher importance and visibility. Social networks nowadays face the challenge of extreme content [3]: propagators of hate have used the new medium as a powerful tool to reach new audiences, as well as to spread racist propaganda and incite violence offline [4].
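To make the ranking effect concrete, here is a minimal sketch that contrasts a chronological feed with a popularity-ranked one. It is not any real platform's algorithm: the `Post` fields, the share counts, and the "neutral"/"biased" labels are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    title: str
    posted_at: int  # hypothetical timestamp; larger means newer
    shares: int     # hypothetical popularity signal
    stance: str     # illustrative label: "neutral" or "biased"


def chronological_feed(posts):
    """Newest first, with no weighting at all."""
    return sorted(posts, key=lambda p: p.posted_at, reverse=True)


def popularity_feed(posts, limit):
    """Most-shared first, truncated to the `limit` posts a user actually sees."""
    return sorted(posts, key=lambda p: p.shares, reverse=True)[:limit]


# Made-up sample data: the biased posts happen to be shared more often.
posts = [
    Post("Neutral report A", posted_at=4, shares=120, stance="neutral"),
    Post("Biased take B", posted_at=3, shares=900, stance="biased"),
    Post("Neutral report C", posted_at=2, shares=80, stance="neutral"),
    Post("Biased take D", posted_at=1, shares=700, stance="biased"),
]

print([p.title for p in chronological_feed(posts)])
print([p.title for p in popularity_feed(posts, limit=2)])
```

With this sample data, the chronological feed starts with a neutral report, while the two slots of the popularity feed are both taken by the heavily shared biased posts.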

We used to count on the “marketplace of ideas” [5] to drive false information out, but finding contrary perspectives now requires much, much more effort. Comparing the potential damage of problematic speech with the remedies a court can offer after the fact, we might come to agree that some kind of regulation needs to be imposed, whatever its form.

“Report Abuse” mechanism

Social media currently rely on a mechanism known as “report abuse” to take down problematic comments. Twitter, for example, accepts reports on pornography, impersonation, violent threats, spam, and other violations of the Twitter Terms of Service [6]. Loosely based on the existing copyright infringement reporting mechanism (which dates back to the 1998 Digital Millennium Copyright Act), these systems allow social network users to file a complaint against a specific account or post, which the service provider later reviews to decide on removal.

Whichever way the decision goes, the platform is not required to give the user any reason for removing or keeping the post. Some have criticized the reporting system as a potential way for social media companies to take down content without restraint, e.g. social activists’ Facebook accounts being closed without warning, but the problem lies far beyond censorship alone.

The unknown red line

The fundamental flaw of a reporting system is the opaqueness of its violation standard. While the majority of removed content is obviously problematic, like spam or bot-generated content, the platforms cannot exempt themselves from controversial edge cases. How much violence must a post contain to be classified as a “violent threat”? Social media websites tried to play a neutral role in speech regulation, but ended up trapped in a position no better than that of a court under an autocratic regime.

Even criteria that seem clear, e.g. pornography, face serious challenges. Free the Nipple movement participants had their accounts locked for posting photographs containing nudity, while photos of men with exposed nipples were perfectly fine [7]. The award-winning picture of Phan Thi Kim Phuc, known as the “Napalm girl” of the Vietnam War, was also removed by Facebook because of nudity [8], and was later restored following a global outcry [9]. These two cases demonstrate how hard the red line is to draw. Social media companies are struggling with a question on which even the U.S. Supreme Court has not gotten much further than “I know it when I see it.”

Privacy as its price

To place more responsibility on users, an alternative approach to content regulation is to require users to disclose their identity. Chinese social media websites have long demanded real names, while Facebook recently asked some of its users to upload proof of identity. This could fundamentally harm freedom of speech, as individuals in a police state risk prosecution for what they express.

Regulations take place

Since the 2016 U.S. presidential election, scholars have pointed out the potential danger of content filtering. We value both the authenticity of facts and the freedom of expression, yet the traditional court system model does not work at the scale of social media. Under these premises, we may propose the following possible regulations:

First and foremost, on the problem of content filtering, an individual’s right “to be untampered” should be established on social network services. For a citizen born and raised in the digital era, an environment with diverse ideas is crucial, and companies should not profit by exploiting the general public. One should be able either to turn off suggestions completely, or to switch to a side-by-side view of opinions from the opposite end of the social spectrum.

To deal with the opaqueness of the abuse procedure, regulations need to require social media companies to publish their own transparency reports. These should clearly state their standard for removal, the number of removed posts, and the percentage of removals demanded by local governments.
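As a rough illustration of what such a report could contain, the sketch below aggregates a removal log into the three figures named above. The `Removal` record, its field names, and the sample entries are hypothetical; no existing platform's report format is implied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Removal:
    post_id: str
    rule: str                 # which published removal standard was applied
    government_request: bool  # True if a local government demanded the removal


def transparency_report(removals):
    """Summarize a removal log: total count, count per rule, government share."""
    total = len(removals)
    by_rule = {}
    for removal in removals:
        by_rule[removal.rule] = by_rule.get(removal.rule, 0) + 1
    demanded = sum(1 for removal in removals if removal.government_request)
    percent = 100.0 * demanded / total if total else 0.0
    return {
        "removed_posts": total,
        "removed_by_rule": by_rule,
        "government_demanded_percent": percent,
    }


# Made-up log entries for illustration only.
removals = [
    Removal("p1", "spam", False),
    Removal("p2", "violent threat", True),
    Removal("p3", "spam", False),
    Removal("p4", "nudity", False),
]

report = transparency_report(removals)
print(report)
```

Listing the count per rule next to the published standard is one way to let readers check whether removals actually follow the stated criteria.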

Furthermore, in edge cases where a company’s decision is controversial, end users should be allowed to turn to local courts to resolve the dispute, similar to an appeal procedure. The judicial system has far more familiarity with restrictions on speech and their possible consequences. We should not leave users stranded for lack of a neutral third party, but actively get involved to protect their right to expression.

New media could equally promote the “marketplace of ideas”, just in a different way than traditional media. We have seen such a paradigm shift once before, when newspapers adopted the idea of readers’ critiques, giving contrary ideas a chance to be present and giving readers a choice.

Toward the era of Internet society

The problem of content filtering is itself the outcome of our own likes and dislikes. In an ever-evolving world with flattened hierarchies and borderless access to information, lawmakers should get creative when facing the Internet as a medium. Civic techies have already implemented fact checkers [10] such as News Helper from the g0v community, and more are yet to come. How we encourage the common good of netizens, returning the power of Leviathan to the general public, will play a significant role in the debate for the foreseeable future.

1. Facebook. (2016, September 30). Company Info [Newsroom stat]. Retrieved from http://newsroom.fb.com/company-info/.

2. Kevin Weil (2010, February 22). Measuring Tweets [Blog post]. Retrieved from https://blog.twitter.com/2010/measuring-tweets.

3. Mike Wendling (2015, June 29). What should social networks do about hate speech? BBC Trending. Retrieved from http://www.bbc.com/news/blogs-trending-33288367.

4. Ben-David & Matamoros-Fernandez (2016). Hate Speech and Covert Discrimination on Social Media: Monitoring the Facebook Pages of Extreme-Right Political Parties in Spain. International Journal of Communication, 10, 1167–1193.

5. “Marketplace of ideas” (言論自由市場): see the dissenting opinion of Justice Sun Sen-Yen (孫森焱) in Judicial Yuan Interpretation No. 414 and the concurring opinion of Justice Wu Geng (吳庚) in Judicial Yuan Interpretation No. 509.

6. Twitter. How to report specific types of violations [Help center answer]. Retrieved from https://support.twitter.com/articles/15789#specific-violations.

7. Amar Toor (2016, October 12). Facebook still has a nipple problem. The Verge. Retrieved from http://www.theverge.com/2016/10/12/13241486/facebook-censorship-breast-cancer-nipple-mammogram.

8. Julia Carrie Wong (2016, September 8). Mark Zuckerberg accused of abusing power after Facebook deletes 'napalm girl' post. The Guardian. Retrieved from https://www.theguardian.com/technology/2016/sep/08/facebook-mark-zuckerberg-napalm-girl-photo-vietnam-war.

9. Sam Levin, J.C. Wong, & L. Harding (2016, September 9). Facebook backs down from 'napalm girl' censorship and reinstates photo. The Guardian. Retrieved from https://www.theguardian.com/technology/2016/sep/09/facebook-reinstates-napalm-girl-photo.

10. g0v News Helper [Home page]. Retrieved from https://newshelper.g0v.tw/.
